This results in higher variance due to smaller sample size but less bias as each will be closer to.

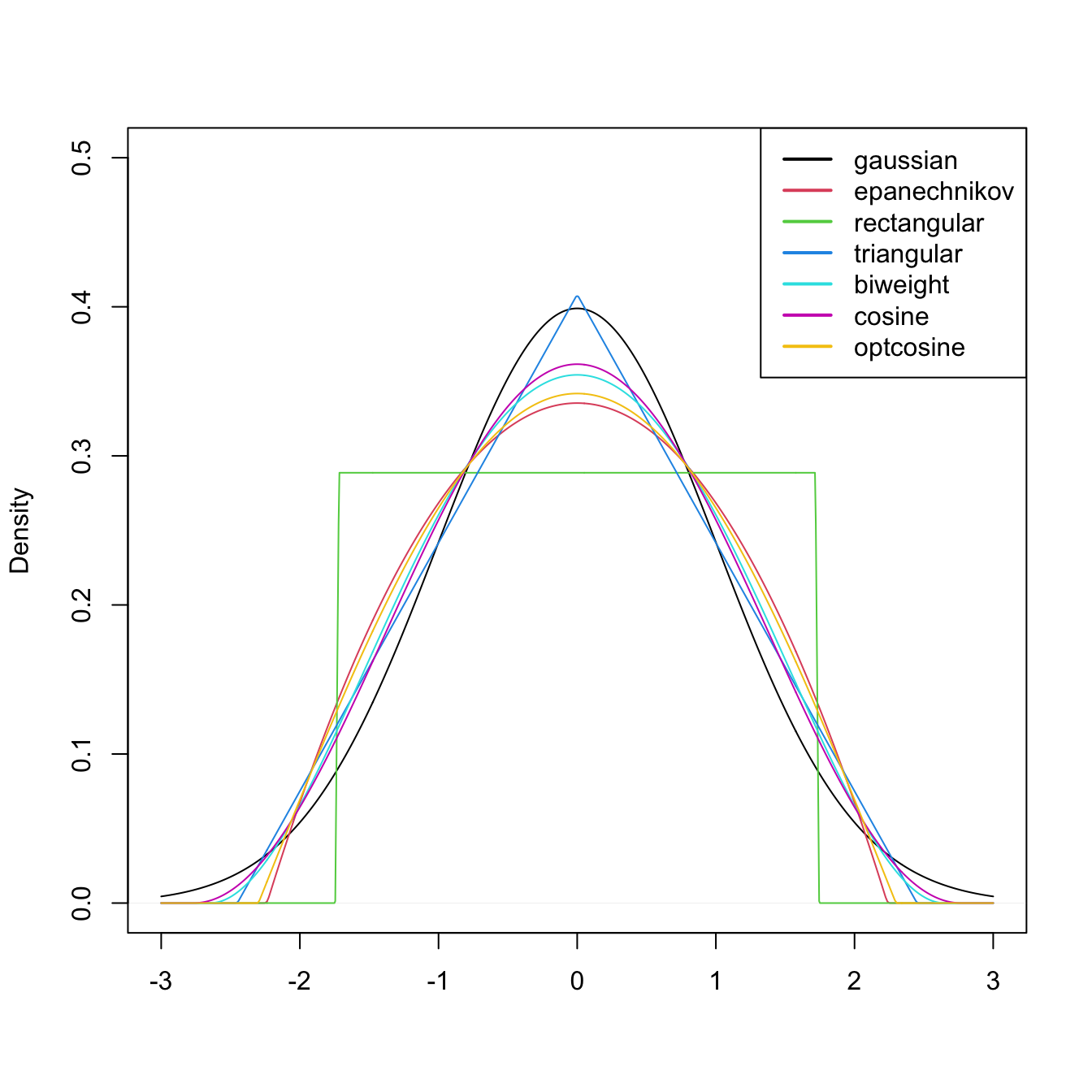

If we choose a small value of, we consider a smaller number of. The infamous bias-variance trade-off must be considered when selecting. For k-nearest neighbours, would translate to the size of the neighbourhood expressed as the span (k points within the N observation training set). In the case of a Gaussian kernel, would translate to the standard deviation. The flatter, bell-shaped curve represents which clearly oversmooths the data.Īs noted above, the bandwidth determines the size of the envelope around and thus the number of used for estimation. The jagged dotted line is the estimate of when the bandwidth is halved. The thick black line represents the optimal bandwidth. Kernel density estimates for various bandwidths. Choice of the bandwidth, however, is often more influential on estimation quality than choice of kernel. The Epanechnikov kernel is considered to be the optimal kernel as it minimizes error. Other popular kernels include the Epanechnikov, uniform, bi-weight, and tri-weight kernels. Symmetric non-negative kernels are second-order and hence second-order kernels are the most common in practice. For example, if and, then is a second-order kernel and. The order of a kernel,, is defined as the order of the first non-zero moment. The Gaussian, or Normal, distribution is a popular symmetric, non-negative kernel. Non-negative kernels satisfy for all and are therefore probability density functions. This helps to ensure that our fitted curve is smooth. The kernel function acts as our weighting function, assigning less mass to observations farther from. Kernel FunctionsĪ kernel function is any function which satisfies Our derived estimate is a special case of what is referred to as a kernel estimator. The greater the number of observations within this window, the greater is. That is, the bandwidth controls the degree of smoothing. The bandwidth dictates the size of the window for which are considered. 
Mathemagic!įrom our derivation, we see that essentially determines the number of observations within a small distance,, of. Since (draw a picture if you need to convince yourself)! Simplifying further, Instead, lets consider a discrete derivative. It might seem natural to estimate the density as the derivative of but is just a collection of mass points and is not continuous. essentially estimates, the probability of being less than some threshold, as the proportion of observations in our sample less than or equal to. I’m not saying naturally to be a jerk! I know the feeling of reading proof-heavy journal articles that end sections with “extension to the d-dimensional case is trivial”, it’s not fun when it’s not trivial to you. The CDF is naturally estimated by the empirical distribution function (EDF) Estimation of has a number of applications including construction of the popular Naive Bayes classifier, Our goal is to estimate from a random sample. Let be a random variable with a continuous distribution function (CDF) and probability density function (PDF) We will therefore start with the slightly less sexy topic of kernel density estimation. Many of these methods build on an understanding of each other and thus to truly be a MACHINE LEARNING MASTER, we’ve got to pay our dues. It is important to have an understanding of some of the more traditional approaches to function estimation and classification before delving into the trendier topics of neural networks and decision trees. 
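To make the derivation concrete, here is a minimal pure-Python sketch. It assumes simulated standard normal data with a fixed seed. The helper names `edf`, `discrete_derivative`, `kde` and `integrate` are hypothetical illustrations, not functions from any library. The sketch checks three things. First, the central difference of the EDF matches the kernel estimator with the uniform kernel $K(u) = \frac{1}{2} I(-1 \le u < 1)$. Second, each kernel integrates to one. Third, the Gaussian kernel estimate changes shape as $h$ shrinks or grows. The Epanechnikov kernel is written in its common form $\frac{3}{4}(1 - u^2)$ on $[-1, 1]$.

```python
import math
import random


def edf(sample, x):
    """Empirical distribution function F_hat(x): share of observations <= x."""
    return sum(1 for xi in sample if xi <= x) / len(sample)


def discrete_derivative(sample, x, h):
    """Central difference of the EDF: (F_hat(x + h) - F_hat(x - h)) / (2h)."""
    return (edf(sample, x + h) - edf(sample, x - h)) / (2 * h)


def uniform_kernel(u):
    """K(u) = 1/2 * I(-1 <= u < 1), the kernel implied by the derivation."""
    return 0.5 if -1 <= u < 1 else 0.0


def gaussian_kernel(u):
    """Standard normal density; the bandwidth h then acts as a standard deviation."""
    return math.exp(-0.5 * u * u) / math.sqrt(2 * math.pi)


def epanechnikov_kernel(u):
    """K(u) = 3/4 * (1 - u^2) on [-1, 1], zero elsewhere."""
    return 0.75 * (1 - u * u) if abs(u) <= 1 else 0.0


def kde(sample, x, h, kernel):
    """Kernel estimator f_hat(x) = 1 / (n h) * sum_i K((x - X_i) / h)."""
    return sum(kernel((x - xi) / h) for xi in sample) / (len(sample) * h)


def integrate(func, lo, hi, steps=20000):
    """Midpoint-rule approximation of the integral of func over [lo, hi]."""
    width = (hi - lo) / steps
    return sum(func(lo + (k + 0.5) * width) for k in range(steps)) * width


if __name__ == "__main__":
    rng = random.Random(42)  # fixed seed (an assumption) for reproducibility
    sample = [rng.gauss(0.0, 1.0) for _ in range(200)]  # simulated N(0, 1) data

    # The discrete derivative of the EDF and the uniform-kernel estimator agree.
    for x in (-1.0, 0.0, 0.7):
        print(x, discrete_derivative(sample, x, 0.5), kde(sample, x, 0.5, uniform_kernel))

    # Small h gives a jagged estimate; large h gives a flatter, oversmoothed one.
    for h in (0.1, 0.4, 1.6):
        print(h, [round(kde(sample, x, h, gaussian_kernel), 3) for x in (-2, -1, 0, 1, 2)])
```

Evaluating every observation at every point costs $O(n)$ per evaluation, which is fine for illustration. A library implementation would likely be preferable for larger samples.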